You may use the *HPC scripts, which copy your data to the node-specific folders after running decomposePar and copy it back to the local case folder before running reconstructPar. Because the data is processed directly on the nodes, it may be lost if the job is cancelled before it has been copied back into the case folder, so don't forget to allocate enough wall-time for the decomposition and reconstruction of your cases.

For example, if you want to run snappyHexMesh in parallel, you may use a command such as:

$ mpirun -bind-to core -map-by core -report-bindings snappyHexMesh -overwrite -parallel

For running jobs on multiple nodes, OpenFOAM needs passwordless communication between the nodes in order to copy data into the local folders. A small trick using ssh-keygen once will let your nodes communicate freely over rsh. Do it once (if you haven't done it already in the past):

$ cat $HOME/.ssh/id_rsa.pub >> $HOME/.ssh/authorized_keys

4 Building an OpenFOAM batch file for parallel processing

4.1 General information

Before running OpenFOAM jobs in parallel, it is necessary to decompose the geometry domain into as many segments as the number of processors (or threads) you intend to use. For example, if you want to run a case on 8 processors, you first have to decompose the mesh into 8 segments. Then you start the solver in parallel, and OpenFOAM runs the calculations concurrently on these segments, with one processor responsible for one segment of the mesh and sharing data with all other processors. There is, of course, a mechanism that connects the calculations properly, so you don't lose your data or generate wrong results.

The decomposition and the building of the segments are handled by the decomposePar utility. The number of subdomains into which the geometry will be decomposed is specified in "system/decomposeParDict", as is the decomposition method to use.
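As a rough illustration of the "system/decomposeParDict" settings mentioned above, the following Python sketch writes a minimal dictionary for a given number of segments. The helper names are hypothetical, and the "scotch" method is only an assumed choice (it is one of OpenFOAM's decomposition methods and needs no extra coefficients); your case may call for a different method.

```python
from pathlib import Path
import tempfile


def render_decompose_par_dict(n_subdomains: int, method: str = "scotch") -> str:
    """Return the text of a minimal decomposeParDict.

    n_subdomains should equal the number of MPI processes you plan to use.
    The default method "scotch" is an assumption made for this sketch.
    """
    if n_subdomains < 1:
        raise ValueError("n_subdomains must be at least 1")
    header = (
        "FoamFile\n"
        "{\n"
        "    version     2.0;\n"
        "    format      ascii;\n"
        "    class       dictionary;\n"
        "    object      decomposeParDict;\n"
        "}\n\n"
    )
    body = (
        f"numberOfSubdomains {n_subdomains};\n\n"
        f"method          {method};\n"
    )
    return header + body


def write_decompose_par_dict(case_dir, n_subdomains: int, method: str = "scotch") -> Path:
    """Write system/decomposeParDict inside case_dir and return its path."""
    system_dir = Path(case_dir) / "system"
    system_dir.mkdir(parents=True, exist_ok=True)
    target = system_dir / "decomposeParDict"
    target.write_text(render_decompose_par_dict(n_subdomains, method))
    return target


if __name__ == "__main__":
    # Demonstrate on a throwaway directory instead of a real case folder.
    with tempfile.TemporaryDirectory() as case:
        path = write_decompose_par_dict(case, 8)
        print(path.read_text())
```

Keeping the segment count in one place like this makes it easier to pass the same number to the parallel launcher, so the number of segments and the number of processes cannot drift apart.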
OpenFOAM (Open-source Field Operation And Manipulation) is a free, open-source CFD software package with an extensive range of features to solve anything from complex fluid flows involving chemical reactions, turbulence and heat transfer, to solid dynamics and electromagnetics.

After loading the desired module, the OpenFOAM applications still have to be activated.

For better performance when running OpenFOAM jobs in parallel on bwUniCluster, it is recommended to have the decomposed data in local folders on each node.

ParaView provides a powerful graphical user interface (GUI) to explore and filter simulation data. It is capable of rendering images and videos with user-defined color and configuration settings.

In order to use the ParaView desktop on the OMNI cluster, connect to the cluster login nodes via SSH with X support, i.e. with the -X option. Then load the module and launch the ParaView GUI:

$ module load paraview

The launch command must include the option --mesa, because Mesa support is necessary. Note also that in this example ParaView is launched in the background by appending &. This is not required, but it allows you to keep working in the same console while the ParaView window is open. More information about foreground and background processes can be found in our Linux tutorial.

ParaView offers a Python application programming interface (API) to automate more extensive data processing or recurring tasks. You can either write such a Python script yourself (see ParaView's Python API documentation) or record the sequence from a ParaView session.

Caution: When running a script, keep in mind that you share the login nodes with everyone, and do not run compute-intensive tasks for longer periods of time. We reserve the right to kill long-running processes without prior warning if we find that they slow down the login nodes.

The following example script uses the ParaView Python API to generate a sphere and save an image:

    from paraview.simple import *
    [...]
    Rep.RescaleTransferFunctionToDataRange(True)
    [...]

To run this example, save the code on the cluster as DistributedSphere.py, load the module on one of the cluster login nodes and call the script with pvbatch:

$ module load paraview

The ParaView Python API also supports parallel execution using MPI. In the following example, 4 parallel processes are used:

$ module load paraview
$ mpiexec -np 4 pvbatch --mesa DistributedSphere.py
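The pvbatch invocations described above differ only in whether they are wrapped in mpiexec. The following Python sketch assembles the argument list for either case, for example for use in a job script generator. The function name is hypothetical, the serial form is inferred from the parallel command, and the Mesa flag is a parameter because its exact spelling should be checked against your ParaView installation.

```python
import shlex


def build_pvbatch_command(script: str, n_procs: int = 1, mesa_flag: str = "--mesa") -> list:
    """Build the argument list for running a ParaView Python script in batch mode.

    With n_procs == 1 the script is run by pvbatch directly (assumed serial form);
    with n_procs > 1 the same call is wrapped in "mpiexec -np <n_procs>".
    """
    if n_procs < 1:
        raise ValueError("n_procs must be at least 1")
    command = ["pvbatch", mesa_flag, script]
    if n_procs > 1:
        command = ["mpiexec", "-np", str(n_procs)] + command
    return command


if __name__ == "__main__":
    # Print the commands instead of executing them, so this runs anywhere.
    for n in (1, 4):
        print(shlex.join(build_pvbatch_command("DistributedSphere.py", n)))
```

Returning a list rather than a single string means the result can be handed to subprocess.run without a shell, which avoids quoting problems with unusual script paths.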

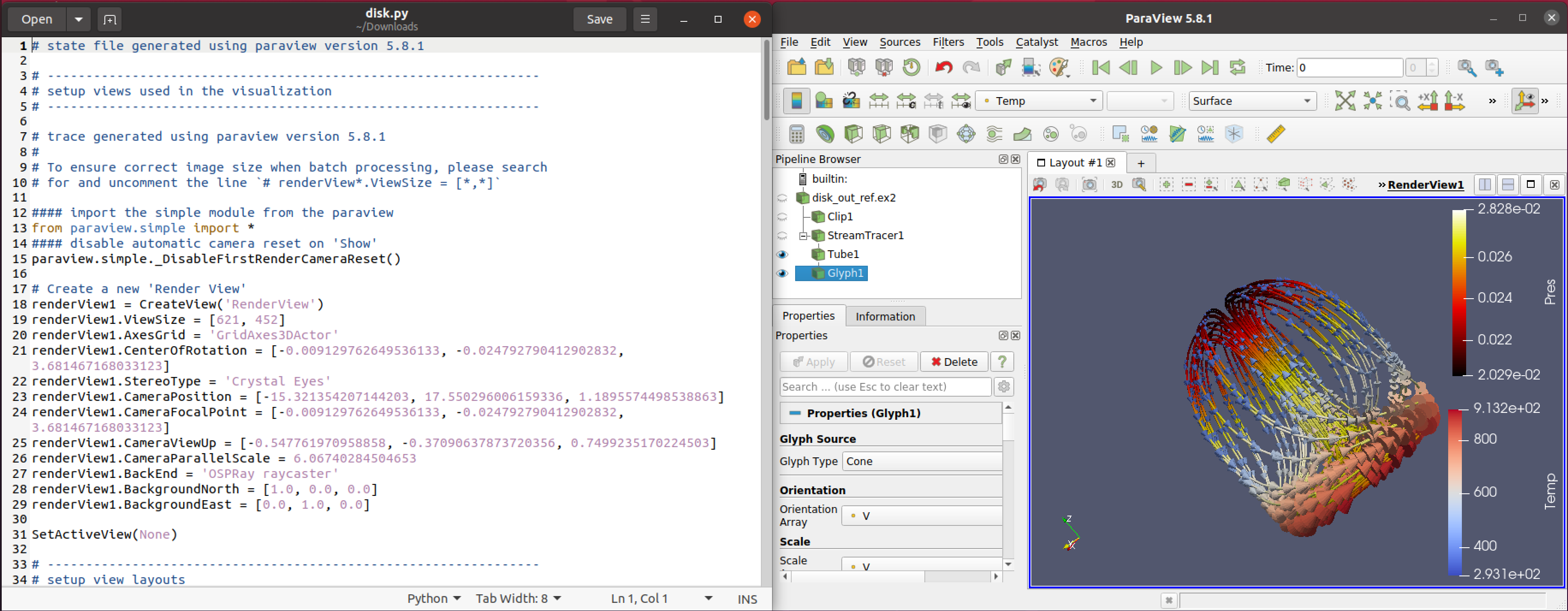

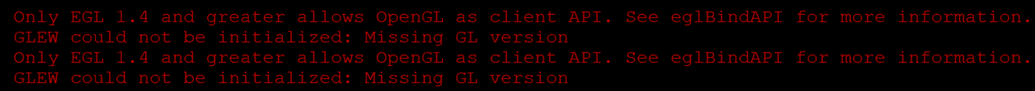

ParaView is an open-source, multi-platform data analysis and visualization application.

Caution: Although ParaView is available across all nodes, the application works only on the login nodes.

ParaView version 5.9.0 is installed on the OMNI cluster. To use it, you need to load the module paraview:

$ module load paraview

Further details can be found in the ParaView documentation.
